There are many proposed ways to place limits on artificial intelligence (AI), prompted by its potential to cause harm in society as well as by its benefits.

For example, the EU's AI Act places greater restrictions on systems depending on whether they fall into the category of general-purpose and generative AI or are considered to pose limited, high or unacceptable risk.

This is a new and bold approach to mitigate any adverse effects. But what if we could adapt some of the tools that already exist? Software licensing is a well-known model that can be tailored to meet the challenges of advanced AI systems.

Open responsible AI licenses (OpenRails) can be part of this answer. AI licensed under an OpenRail is comparable to open source software. A developer may publicly release their system under the license. This means that anyone is free to use, modify, and reshare what was originally licensed.

The difference with OpenRail is the addition of conditions about the responsible use of the AI. These include not breaking the law, not impersonating people without their consent and not discriminating against people.

In addition to the mandatory conditions, OpenRails can be customized to include other conditions that are directly relevant to the specific technology. For example, if an AI is created to categorize apples, the developer can specify that it should never be used to categorize oranges, as this would be irresponsible.

The reason this model can be useful is that many AI technologies are so general that they can be used for many things. It is really difficult to predict the nefarious ways in which they can be exploited.

This model therefore allows developers to promote open innovation while reducing the risk of their ideas being used in irresponsible ways.

Open but responsible

Proprietary licenses, on the other hand, are more restrictive in how software can be used and modified. Designed to protect the interests of creators and investors, they have helped tech giants like Microsoft build massive empires by charging fees to access their systems.

Because of its broad reach, AI arguably requires a different, more nuanced approach that could foster the openness that drives progress. Many large companies currently use their own – closed – AI systems. But this could change as there are several examples of companies using an open source approach.

Meta’s generative AI system Llama-v2 and the image generator Stable Diffusion are open source. French AI startup Mistral, founded in 2023 and now valued at $2 billion, will soon openly release its latest model, which is rumored to have performance comparable to GPT-4 (the model behind ChatGPT).

However, openness must be tempered with a sense of responsibility to society, due to the potential risks associated with AI. These include the potential of algorithms to discriminate against people, replace jobs and even pose existential threats to humanity.

We also need to consider the more mundane, everyday applications of AI. The technology will increasingly become part of our social infrastructure, a central part of the way we access information, form opinions and express ourselves culturally.

Such a universally important technology carries its own kind of risk, different from the robot apocalypse, but still very much worth considering.

One way to do this is to compare what AI can do in the future with what free speech does now. The free sharing of ideas is not only crucial for upholding democratic values, but is also the engine of culture. It facilitates innovation, encourages diversity and allows us to distinguish truth from untruth.

The AI models being developed today will likely become a primary means of accessing information. They will shape what we say, what we see, what we hear and, by extension, how we think.

In other words, they will shape our culture in much the same way that free speech does. For this reason, there is a good argument that the fruits of AI innovation should be free, shared and open. And as it happens, most of it already is.

Boundaries are needed

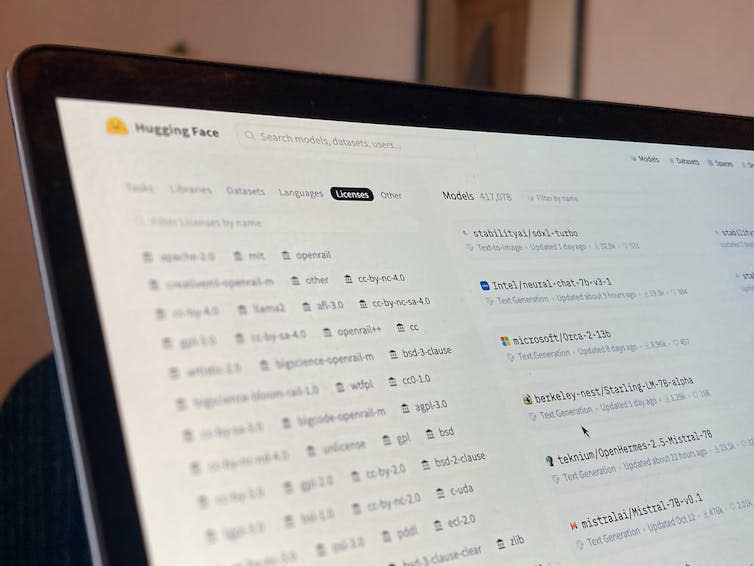

On the HuggingFace platform, the world’s largest AI developer hub, there are currently more than 81,000 models published under ‘permissive open source’ licenses. Just as the right to speak freely overwhelmingly benefits society, this open sharing of AI is an engine for progress.

However, freedom of expression has necessary ethical and legal limits. Prohibitions on making false claims that harm others and on expressing hatred based on ethnicity, religion or disability are both widely accepted restrictions. Providing innovators with a means to find this balance in AI innovation is what OpenRails does.

For example, deep learning technology is used in many valuable domains, but it also underlies deepfake videos. The developers probably didn’t want their work to be used to spread disinformation or create non-consensual pornography.

An OpenRail would have allowed them to share their work with restrictions that would, for example, prohibit anything that would break the law, cause harm or lead to discrimination.

Legally enforceable

Can OpenRail licenses help us avoid the inevitable ethical dilemmas that AI brings? Licensing can go some way, with the caveat that licenses are only as good as the ability to enforce them.

Currently, enforcement would likely resemble that for music copying and software piracy, where cease and desist letters are sent with the prospect of possible legal action. While such measures do not stop piracy, they do discourage it.

Despite the limitations, there are many practical benefits: licensing is well understood by the technical community, is easily scalable, and can be adopted with little effort. Developers have recognized this, and to date more than 35,000 models hosted on HuggingFace have been released under OpenRails.

Ironically, given its name, OpenAI, the company behind ChatGPT, does not openly license its most powerful AI models. Instead, with its flagship language models, the company takes a closed approach that allows anyone willing to pay to access the AI, while preventing others from building on or modifying the underlying technology.

As with the freedom of speech analogy, the freedom to openly share AI is a right we should hold dear, but perhaps not an absolute one. While not a silver bullet, licensing-based approaches like OpenRail appear to be a promising piece of the puzzle.

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Joseph’s and Jesse’s research is currently supported by Design Research Works (https://designresearch.works) under UK Research and Innovation (UKRI) grant reference MR/T019220/1. They are both members of the steering committee of the Responsible AI Licenses initiative (https://www.licenses.ai/).